Reader Fikri asked:

I’ve been trying for a while to understand RACECAR/J and other similar projects out there. Please correct me if my understanding is way off. So, there are two different approaches to building this project: first, NVIDIA’s way (end-to-end learning), like what Tobias did, and second, MIT’s or UPenn’s way (RACECAR and F1/10). Is that correct, Jim?

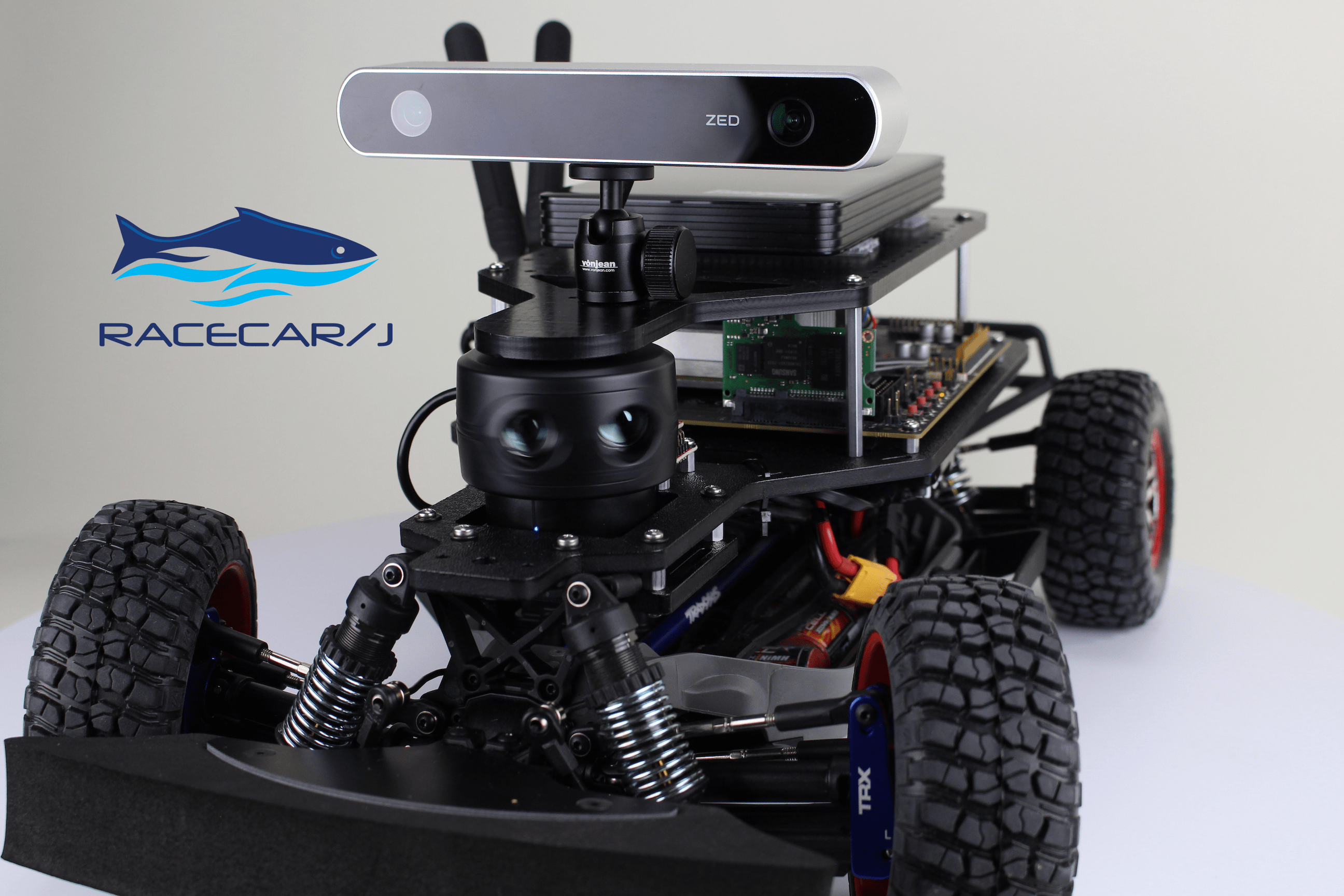

While it’s difficult to speak to the other projects, we can talk about RACECAR/J. As you know, RACECAR/J is a robotic platform built using a 1/10 scale RC car chassis. The computation is done by an NVIDIA Jetson Development Kit. There are sensors attached to the Jetson, as well as a controller that allows the Jetson to adjust the speed of the chassis motor and the steering angle of the wheels.

Alan Kay once said, “People who are really serious about software should make their own hardware.” And so it is with robots. RACECAR/J is a very simple robot, but it provides a platform on which to explore self driving vehicles. There are many challenges on the way to a vehicle that can drive itself. Some can be thought of as very granular. Others involve combining different inputs and calculating a course of action.

Let’s examine the intent of RACECAR/J. For people just starting out in ‘robocars’, there are several areas to explore. You’ve probably seen inexpensive line following robots. Once you’re more serious, there are other routes to explore. For example, DIY Robocars is an excellent resource and community for people who want to learn about building their own autonomous vehicles. Many people build their own ‘Donkey Car’ or equivalent with a Raspberry Pi for computing and a camera for a sensor. You can build one of these cars for a couple of hundred dollars. To be clear, this is just one route you can go through in that community.

Once the car is constructed, there are official race tracks where people gather monthly to race against each other. People are exploring different software stacks: some use machine learning, others use computer vision. The introduction of inexpensive LIDARs adds another sensor type to those stacks. Typically the cars communicate information to a base station, and the base station does additional computation in a feedback loop with the car.

The first question that you have to ask yourself is, “What is the interesting part of this problem?” Typically building one of these robots is fun, but it’s not interesting. The interesting part is creating the algorithms that the vehicles use to navigate. This is a new field, and there isn’t a “correct” way to do this just yet.

The second question is, “How does this prepare me for actually building a self driving car?” You have probably seen the many articles about different self driving cars and read about all of the different sensors they use: cameras, radar, lidar, ultrasonic, stereo depth cameras, GPS, IMUs and so on. You also know that the cars have a large amount of specialized computing power on board, such as NVIDIA Drive PX (typically 4 Tegra chips on one board, a Jetson has one), FPGAs, ASICs, and other computing components. The full size autonomous vehicles do all of their computing on board. As you might guess, at Mercedes-Benz or any of the other auto manufacturers, they don’t hook up a Raspberry Pi to a camera and call it done.

Which brings us around to building a more complex robot platform. To understand how all this works, you should be familiar with what the issues are. A major area is sensor fusion: taking all of the sensor information and combining it to come up with the next action to take. This includes figuring out when one of the sensors is lying. For example, what happens when one of the cameras gets blinded by dirt on the lens?
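To make the "lying sensor" idea concrete, here is a minimal sketch, not what RACECAR/J or any production vehicle actually runs. It assumes several sensors report the same distance to an obstacle, and it distrusts any reading that strays too far from the median. The function name `fuse_ranges`, the sensor names, and the 0.5 m threshold are all made up for illustration.

```python
from statistics import median_low


def fuse_ranges(readings, max_deviation=0.5):
    """Fuse redundant distance readings (in metres) into one estimate.

    readings: dict mapping a sensor name to its measured distance.
    Any sensor further than max_deviation from the median is treated
    as suspect and left out of the average.
    """
    if not readings:
        raise ValueError("need at least one reading")
    # median_low always returns an actual reading, so at least one
    # sensor is guaranteed to be trusted.
    mid = median_low(readings.values())
    trusted = {name: value for name, value in readings.items()
               if abs(value - mid) <= max_deviation}
    suspect = sorted(set(readings) - set(trusted))
    fused = sum(trusted.values()) / len(trusted)
    return fused, suspect


# Hypothetical readings: the ultrasonic sensor disagrees with the others.
fused, suspect = fuse_ranges({"lidar": 2.0, "stereo": 2.1, "ultrasonic": 0.2})
print(fused, suspect)
```

Median voting only works when a majority of the sensors agree. Real systems usually weight each sensor by how much it can be trusted in the current conditions, which is a much harder problem.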

Motion planning and control is another area of intense research. The vehicle is here, what’s the most efficient way to get to the next point? This reaches all the way down the hardware stack to PID control of the steering and throttle, and all the way up to integrating an autopilot with a GPS route planning system.
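At the bottom of that stack, a PID controller can be sketched in a few lines. This is a generic textbook version, assuming the error is something like a steering or speed error and the output is a normalized command between -1 and 1. The class name, gains, and limits are placeholders and would need tuning on a real car.

```python
class PID:
    """Textbook PID controller with a clamped output."""

    def __init__(self, kp, ki, kd, out_min=-1.0, out_max=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        """Return the control output for the current error.

        error: setpoint minus measurement.
        dt: seconds since the previous update (must be positive).
        """
        if dt <= 0:
            raise ValueError("dt must be positive")
        self.integral += error * dt
        # No derivative term on the first call: there is no history yet.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        output = (self.kp * error
                  + self.ki * self.integral
                  + self.kd * derivative)
        # Clamp to the range the steering or throttle can accept.
        return max(self.out_min, min(self.out_max, output))


# Example: proportional-only steering with a made-up gain.
steering_pid = PID(kp=0.5, ki=0.0, kd=0.0)
print(steering_pid.update(error=1.0, dt=0.1))
```

The clamp matters in practice because a servo or speed controller only accepts a limited command range. A fuller implementation would also stop the integral term from growing while the output is saturated.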

There are a variety of ways to think about this. One is a very deterministic approach, similar to that taken in computing over the last 40 years. Using computer vision, calculate the next desired destination point, go there, rinse, and repeat until you have arrived at the final destination.
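The "go there, rinse, repeat" loop can be sketched as follows. This is a deliberately simplified illustration: it assumes the waypoints are already known (in a real car they would come from the vision system) and it moves an idealized point that can turn on the spot, ignoring the steering geometry of an actual chassis. The function name and all the numbers are hypothetical.

```python
import math


def drive_to_waypoints(start, waypoints, step=0.1, tolerance=0.15,
                       max_steps=10000):
    """Visit each waypoint in order and return the path travelled.

    start and waypoints are (x, y) positions in metres. The vehicle
    is an idealized point that moves straight at the current target.
    """
    x, y = start
    path = [(x, y)]
    for wx, wy in waypoints:
        for _ in range(max_steps):
            distance = math.hypot(wx - x, wy - y)
            if distance <= tolerance:
                break  # close enough, move on to the next waypoint
            heading = math.atan2(wy - y, wx - x)
            move = min(step, distance)
            x += move * math.cos(heading)
            y += move * math.sin(heading)
            path.append((x, y))
    return path


path = drive_to_waypoints((0.0, 0.0), [(1.0, 0.0), (1.0, 1.0)])
print(len(path), path[-1])
```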

Another approach is that of machine learning. Drive the car around, record everything the sensors ‘see’ and the controls to the steering, throttle and brakes. Play this back to a machine learning training session, and save the result as an inference model. Place the model on the car and watch the car drive itself.
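The record, train, and deploy cycle can be illustrated with a toy stand-in. A real end-to-end system feeds camera images into a deep neural network. The sketch below instead fits a straight line by least squares to a made-up driving log, assuming a single hypothetical input (how far the car is from the lane center) and a single output (the steering command the human driver gave). The data is invented purely for illustration.

```python
def fit_linear(xs, ys):
    """Least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    variance = sum((x - mean_x) ** 2 for x in xs)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# "Recording": made-up log of lane offset (m) and the driver's steering.
offsets = [-0.4, -0.2, 0.0, 0.2, 0.4]
steering = [0.8, 0.4, 0.0, -0.4, -0.8]

# "Training": fit the model to the recorded demonstrations.
slope, intercept = fit_linear(offsets, steering)


# "Inference": the saved model now produces the steering command.
def predict_steering(offset):
    return slope * offset + intercept


print(predict_steering(0.1))
```

The structure is the same as in the full-size version: the model only ever sees what the driver did, so it can only be as good as the recorded driving.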

There are many variations thereof. The thing to note however is that you’ll eventually need some type of hardware to test the software on. Simulators are great, but they ain’t real. Full size cars require a very healthy wallet. That’s the purpose of RACECAR/J. The idea is that a widespread adoption of the hardware platform will mean that people will be able to share what they learn about these problems at a price point decidedly less than a full size vehicle. The hardware platform isn’t the interesting part of this, it’s just a few hours of wrenching to put together something to hold the software.

As it turns out, you can implement end-to-end deep learning (which is a relatively new idea), a vision based system, a LIDAR based system or hybrids. At MIT, their first class was mostly built around the IMU and LIDAR on the RACECAR. They are now teaching machine learning for autonomous vehicles, and the RACECAR is certainly capable of running that software. They are also working on a deeper focus on computer vision solutions.

Conceptually, the underlying hardware on the cars mentioned in the original question is the same, though some of the implementation details are a little different. The actual software stacks on the cars are different: the Tobias version with end-to-end machine learning versus the more traditional LIDAR based solution. In the press, you’ll hear about the (Google) Waymo approach (which is a LIDAR variation) versus the Tesla vision approach. The fact is, it’s still early. As sensors become more mainstream, with higher resolution and lower cost, you can expect one or two approaches to fit the problem well and be adopted. The real question is, how do you access it if you don’t work at one of the big automotive companies?

10 Responses

Thank you so much for your effort Jim! Will definitely read this when I’m home.

Again, thanks Jim!

You’re welcome, and I hope this answers your question.

Hi Jim,

I’m building my Jetson TX2 RACECAR on an ‘old’ RC car which I have turned into a computer controlled car and have the motor and steering working via a control board Motor Drive-L298N in combination with Adafruit 16-Channel 12-bit PWM/Servo Driver – I2C interface – PCA9685.

Now I also want to add the Blackbird 2 3D stereo camera as one of the inputs for the NVIDIA machine learning part, for driving the car autonomously.

Do you have any experience with this type of camera? How can I grab the images it produces for the input of NVIDIA?

The ZED camera is too expensive for me, so I have the Blackbird as an alternative.

The next hurdle is to find a very cheap LIDAR or equivalent sensor for detecting the surroundings of the car. I have a few ultrasonic sensors but need to fuse these inputs in some way. Could these ultrasonic sensors be an alternative?

Keep posting these kinds of articles to inspire us with your enthusiasm for robot cars.

Hi Ton,

I don’t have any experience with the Blackbird. It is an FPV NTSC type of camera, which is meant to transmit an analog signal to a receiver. At some point you would have to digitize the signal to use it with a computer such as the Jetson. A simple USB webcam is a better alternative to get started.

There are many challenges in sensor selection for these types of projects. For several years people have been using second hand Neato vacuum robot LIDARs as an inexpensive solution. As in most things mechanical, electrical, and software, the design tradeoffs should be made with the understanding of the problem area. An inexpensive LIDAR will need a lot of work to get to minimum performance levels. That may be an ok tradeoff, but the analogy is that you don’t expect a Toyota Corolla to lap a race track as fast as a Ferrari. There are tradeoffs as a designer you have to make.

Just to be clear, when someone wants to do parts substitutions or alternatives and asks me about it, they get the same answer: “It depends on what you’re trying to do”. The goal is to understand what the tradeoffs of an approach might be, and the only way you can get that knowledge is to actually play with it yourself. Thanks for reading!

Thanks for the constructive answer!!

I keep reading!

What is the price range for this, and where can I buy it, please?

We’re still working through the details and logistics to make this into a product. RACECAR/J will be available assembled and in kit form. Prices will start ~ $2600 USD for a kit version without LIDAR with LIDAR options above that. We will announce availability as we get closer to production.

Hi,

Where can I find the SDK for RACECAR/J? Does it come bundled with Python and C/C++ libraries for OpenCV, TensorFlow and ROS, to reduce the time spent on device setup for application development? Will it be possible to get this SDK so that I can install and try it on a TX2 dev kit while waiting for RACECAR/J to become available?

MIT RACECAR has two depth cameras on board (ZED for “passive”, and structure.io for “active”). Is there an advantage to one over the other, are they both “necessary”, or is it just an additional input into the sensor fusion, for improved confidence?

Each type of sensor has advantages and disadvantages. You might think of a case where a visible light wavelength camera is inferior to an infrared camera, for example. MIT has used a wide variety of sensors on their RACECARs: 3D LIDAR, 2D LIDAR, ultrasonic sensors, radars, infrared depth cameras, RGBD cameras, and so on. They use the robots in both educational and research environments, so any given configuration is related to the curriculum or research that they are doing at the time.